Releasing Kiln - A CMake-compatible build system that can do what CMake can't

See my previous post about dmake for the initial results. This post is a release announcement, a postmortem and an overview.

Note: I had to rename the project as one of my friends pointed out that it was conflicting with dmake, the distributed make utility.

There are also the slides from the first public talk I gave at the CyCraft C++ club (HTTP only unfortunately; sorry Gemini folks, this time the slides are not suitable for print-to-PDF).

The project idea and initial attempts started 2 years ago, when I wanted to improve upon CMake. I wrote a CMake parser, a basic interpreter and a debugger in Lua of all languages (because I am weird), but never had the time to really push it. Now it is rewritten in C++23, and the entire project being possible owes to AI coding agents. They type much faster than I do with a keyboard. Vim helps people type at the speed of their mind. The AIs type at whatever tokens per second they are capable of.

Why a new build system?

The entire reason for having a build system is that having compilers is not enough. You need an automated way to tell the compiler how to build your project. Most of us doing C and C++, when we started out, had a programming homework that scaled beyond a single file and needed a way to automatically compile and link multiple source files together. Usually we end up writing a shell script to automate the process. However, that has obvious downsides: it always compiles even if you didn't edit the source code, linking to external libraries is a major pain, etc. Most of the time people graduate to Makefiles to avoid unnecessary compiles, and switch to Linux so the package manager handles external packages. Eventually they switch to CMake so they don't have to think about the actual compilation process and can be more declarative. "Here's the source code and I want you to link to this and that. Compile!" basically.

However, despite CMake's success, it has some usability pains, and they are interlinked. First, CMake is not a build system; CMake is a build system generator. CMake doesn't directly invoke the compiler to compile your code. Instead CMake generates files that are then consumed by one of the supported build systems, most commonly make (Makefiles) or ninja (build.ninja). The process of CMake generating the actual build system files is called "configuration" (I assume influenced by Autotools' configure script). Configuring loses some information: if you ever compute something during configuration, the generated build system often does not know that the information has changed, and you end up with stale information. This problem most commonly comes up as stale globs or aux_source_directory. The build system (make or ninja) is responsible for looking after the actual compilation steps, but the list of files to be compiled is collected during the configuration phase, so files added to folders are not automatically recognized. CMake's documentation actually warns against the use of globs or aux_source_directory for this reason despite their convenience, and smaller projects just try to list all source files manually; the build systems are still smart enough to reconfigure if the CMake source files change.

Second, CMake (the programming language/interpreter) is slow. This is noticeable but usually ignored because configuration happens very rarely. But the only way to fix both the globbing and the stale pre-computed result problems is to always rerun the configuration phase. That could take seconds in larger projects, which is an unacceptable time to wait before, say, a single line of compilation happens.

Then, the build systems CMake supports have, in my opinion, terrible interfaces and experience. Makefile is (I think) the most common generation target. But the generated Makefile blocks compilation of a dependent completely if a dependency has not completed linking, even if compiling the source files has nothing to do with the shared object. And Ninja forces compilers to not show color in error messages. Yikes!

These reasons, plus others not mentioned, are why I wanted to start a new build system. But there's an ecosystem problem. I can have the best build system in the world, but adoption is a harder problem than writing code that makes things run. Unless there are very good reasons, people and projects are not going to drop their existing effort and flow just to try out the promised land. Plus there's the network effect: it's difficult to convince people to use a new build system if consuming other projects means manual finessing with CMake outputs. Whatever I build must take existing CMakeLists.txt as input.

Also I want to avoid the "yet another standard" problem.

Current state of Kiln

Kiln as a build system works. Namely:

- Works on Linux + GCC

- Basics: incremental builds, dependency tracking, invoking other build systems

- Builds enough projects I throw at it, including but not limited to: qtcore, TBB, Folly, POCO, Vorbis, Opus, DuckDB, MariaDB

- Also CMake builds from Kiln

- Install to install prefix

- External projects

- CPM/FetchContent

How does CMake work

What we call CMake is actually 3 things.

- The CMake programming language

- The CMake interpreter/build generator core engine

- The CMake modules (think libraries to help the CMake interpreter to do stuff)

The CMake language

The CMake language is a programming language designed around the turn of the 2000s that does not follow most modern language design guidelines. But the more important thing is that CMake achieved the goal it was (I assume) designed for: improving the syntax upon bash and autotools, while keeping the core string-ey design for flexibility and familiarity. In CMake:

- There is only one type - strings

- Arrays (lists) are strings

- Argument substitution affects the parsing result

- Indirect argument substitution allowed

# cmake_strings.cmake

# Lists are strings

list(APPEND LST 1 2 3)

message(STATUS "${LST}") # prints "1;2;3"

# And lists affect the parsing result

message(STATUS ${LST}) # prints "123"

# Even some traditionally syntactic elements

set(OP "STREQUAL")

if("1" ${OP} "1")

message(STATUS "This works")

endif()

# But horrifically sometimes it seemingly doesn't

# and is not a syntax/interpreter error

if(NOT ${THIS_VARIABLE_DOES_NOT_EXIST})

message(STATUS "I am fine")

endif()

# Indirect reference

set(VAR1 "some value")

set(NAME "VAR")

message(STATUS ${${NAME}1}) # Prints "some value"

Running the above code with cmake -P cmake_strings.cmake prints the following:

❯ cmake -P cmake_strings.cmake

-- 1;2;3

-- 123

-- This works

-- some value

The behaviour is very bash-like. The indirect substitution and the lack of structural guarantees in if() conditions are major trouble for efficient AST and interpreter design. They almost force you to resolve the condition syntax at execution time instead of at parse time, which is a cost paid over and over. Plus, indirect references force you to always keep a fallback path if variable interning is ever implemented to reduce lookup overhead. Speaking of which: CMake has dynamic scoping instead of lexical scoping. In most programming languages, you cannot use a variable in a loop body before it is defined.

// C++ rejects variables used prior to their definition

for(int i=0 ; i<5 ; i++) {

std::cout << str << std::endl; // error: name not defined

std::string str = std::to_string(i);

}

Or at a bare minimum the interpreter gives you a null, as the variable's lifetime ends at the bottom of the loop body.

-- Lua gives you nil if variable lifetime has not begun yet

for i=1,5 do

print(str) -- print: nil always

local str = tostring(i)

end

However, CMake decides that the variable lives within the entire looping context.

# cmake_loop_dynamic_lifetime.cmake

# To CMake - variable lives within the entire loop's lifetime

foreach(i RANGE 5)

message(STATUS ${str}) # prints what str is last set to

set(str ${i})

endforeach()

Running it prints

❯ cmake -P cmake_loop_dynamic_lifetime.cmake

--

-- 0

-- 1

-- 2

-- 3

-- 4

This is a major pain in interpreter design: it prevents you (beyond simple cases, even if you try) from directly binding variables to variable slots (an optimization to reduce lookup overhead). Worse, CMake has PARENT_SCOPE, which sets the variable not in the current scope but in the parent's scope. You can't even just look at the current scope anymore; you need to hold a history of the variable and decide where to insert it.

# cmake_parent_scope_set.cmake

function (func)

set(MY_VAR "1" PARENT_SCOPE)

endfunction()

func()

message(STATUS ${MY_VAR}) # print: 1

Ofc, this is how the language works.

❯ cmake -P cmake_parent_scope_set.cmake

-- 1

The language does what it was designed to do and people use it. But hopefully this demonstrates how weird and unconventional the CMake language is, and how that makes designing a fast interpreter difficult.

The CMake core engine

This is the actual cmake executable that you run. It does a lot of things, but mostly:

- Read in CMakeLists.txt

- Parse and interpret the CMake language

- Gather build information, resolve dependencies, propagate properties to dependent targets

- Generate build files for the build system

Think of it as a compiler: cmake reads in CMakeLists.txt -> generates the build graph -> spits out a build system that can be used to build the project.

CMake modules (and config files)

CMake ships its own list of well-known software packages and essentially "how does CMake look for them" information for stuff like OpenSSL, OpenCL, SDL, etc. Modern CMake asks libraries to generate this information themselves via configure_package_config_file() and put it into a centralized location so CMake can find it. In either case, these files come with the heavy baggage of how CMake works. Some have existed for so long that they still use the old if(COND) ... endif(COND) syntax and rely on weird CMake behaviour to function.

Kiln

Kiln is a completely new implementation of the CMake core engine in C++23. It has its own CMake parser, interpreter and dependency resolution, as well as AUTOMOC/UIC/RCC support to compile Qt applications. Well, enough for me to consider it useful at least.

The CMake interpreter

Writing an efficient CMake engine is hard for the reasons described above. And Kiln, despite my (and Claude's) best efforts, is bounded by the constraints of CMake. I don't exactly know how CMake interprets CMake -- I suspect it does string replacement and then parsing for each line/statement. Kiln does it the traditional way, pre-parsing the input source into an AST, but keeps enough information around that it can handle string elision causing syntactical changes. We will discuss that later.

Despite the difficulty of making CMake interpretation efficient, there are a lot of common sense optimizations that still apply. Funny enough, after rounds of optimizations, the interpreter overhead almost never matters. Almost being the keyword here. Most CMake commands do so much work (command parsing, variable expansion, internal state construction) that the command itself is often where the bottleneck lies.

And I do mean common sense: use std::string_view, know that ::tolower() is locale dependent and does more than ASCII, std::from_chars() is the non-throwing and less bloated version of sto*, use transparent hashes for your hashmap to prevent string construction. I recall the initial Kiln (still called dmake at the time) was about twice as fast as CMake. And with the basic optimizations.. about 4 times or so. Afterwards the fun optimizations begin:

Variable scoping is a problem. The naive solution is to spawn a stack frame for each scope created, link them up and, when a variable lookup is needed, traverse the stack frames to find the variable. This is expensive. Even more so because a lot of variable references are to the built-in CMAKE_* ones, which sit at the top of the stack and would mean a traversal of the entire linked list. I don't exactly know the proper name for it, but I (not Claude, I did the designing) ended up designing ShadowMap. Essentially unordered_map<string, vector<string>> to hold all variables, plus some helpers to handle metadata. Lookup and insertion are just shadow_map[variable_name].back() and shadow_map[variable_name].push_back(). Better, because references to unordered_map values are stable (per the C++ standard), this enables some level of interning of variables if the name is guaranteed to be stable at runtime. It is not as efficient as an offset into a flat array, but 2 or 3 levels of pointer chasing is still better than linked list traversal.

Pesky if conditions

String elision and expansion is a real pain in condition handling -- there's always the chance that someone, for some reason, decided to write an expression that only works because of runtime evaluation. There's no way around that. The following terrible code must work.

set(VAR1 "AND")

if(TRUE ${VAR1} FALSE)

message("It works!")

endif()

That rules out pre-parsing the condition and forces Kiln to do runtime evaluation -- or so I thought, until I realized most real world code is well behaved. The most common patterns are if(VAR1 STREQUAL "SOME_STR") and if(${VAR1} STREQUAL "SOME_STR"). The former is safe to pre-parse; the latter may need runtime evaluation, but most of the time does not. The overall execution speed benefits from the parser speculating that the programmer is in fact smart and the variable does not elide or expand. So pre-parsing helps, and re-evaluation is only needed if the variable does elide/expand at runtime.

CMake if() has a few places that will bite you.

AND and OR have equal precedence, evaluated left-to-right. Not what you'd expect coming from basically any other language. if(A OR B AND C) is (A OR B) AND C, not A OR (B AND C). I found this out because Kiln had a bug where I implemented AND with higher precedence the way every language does, and it was wrong. CMake's documentation does say this, but it's easy to miss because it's so against intuition.

if(MYVAR) and if(${MYVAR}) look equivalent. They mostly are, but not always.

set(MYVAR "hello")

if(MYVAR) # true

if(${MYVAR}) # false

if(MYVAR) looks up the variable and checks if the value is falsy. if(${MYVAR}) expands first to the bare string "hello", then tries to look up a variable named "hello", which doesn't exist. So it's false. They only agree when the value is a boolean, a number, or coincidentally the name of another existing variable.

Single-character booleans: Y is truthy, N is falsy. So if a variable ends up holding "N" -- say, from parsing some output -- if(MYVAR) silently returns false. Fun to debug.

DEFINED looks like a reserved keyword. It is, but only contextually. if(DEFINED MYVAR) works. if(DEFINED) alone doesn't check if DEFINED is defined -- it falls through and looks up a variable named DEFINED instead. Same for EXISTS, TARGET, and all the unary operators. They're only keywords when in keyword position, otherwise they're just strings. And they're case-sensitive, unlike the boolean constants. if(defined MYVAR) lowercase is an error, not a silent wrong result.

You'd expect CMake to evaluate if() with a parser -- be it recursive descent, Pratt, something normal. It doesn't. CMake stores the condition as a linked list of tokens and runs reduction passes over it, each pass scanning left-to-right and collapsing patterns in-place. Parentheses first, then unary predicates like EXISTS and DEFINED, then binary comparisons, then NOT, and finally AND and OR together in one pass. Each matched pattern -- say A STREQUAL B -- gets replaced with a literal "0" or "1" in the list, and the consumed tokens are erased. Repeat until the list stops shrinking, then move to the next pass. At the end there should be exactly one token left.

AND and OR being equal precedence falls out naturally from this: they're handled in the same pass, so whichever triplet appears first left-to-right gets reduced first. There's no grammar to get wrong. The "keywords are positional not reserved" property also falls out naturally -- a keyword is only recognised when the scanner is explicitly looking for one at that position in that pass, so DEFINED appearing in the wrong place just gets left alone for a later pass to deal with (or error on at the end).

It's a strange design but it works, and it's surprisingly hard to implement incorrectly. Kiln uses recursive descent, which is more conventional but meant I had to explicitly flatten AND and OR to the same precedence level -- which I did get wrong initially.
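The precedence difference is easy to see in a toy evaluator. The sketch below assumes the operands have already been reduced to "0" or "1" (as the reduction passes described above would leave them) and compares the flat left-to-right rule with the conventional AND-binds-tighter rule. Both function names are hypothetical, and this is neither CMake's nor Kiln's real evaluator.

```cpp
#include <cassert>
#include <cstddef>
#include <string_view>
#include <vector>

using Tokens = std::vector<std::string_view>;  // operand (op operand)*

// AND and OR share one precedence level and associate left to right.
inline bool eval_flat(const Tokens& t) {
    assert(t.size() % 2 == 1 && "expected operand (op operand)*");
    bool acc = (t[0] == "1");
    for (std::size_t i = 1; i + 1 < t.size(); i += 2) {
        const bool rhs = (t[i + 1] == "1");
        acc = (t[i] == "AND") ? (acc && rhs) : (acc || rhs);
    }
    return acc;
}

// For contrast: the rule most languages use, where AND binds tighter than OR.
inline bool eval_and_binds_tighter(const Tokens& t) {
    assert(t.size() % 2 == 1 && "expected operand (op operand)*");
    bool result = false;          // OR of all finished AND-groups
    bool group = (t[0] == "1");   // current AND-group
    for (std::size_t i = 1; i + 1 < t.size(); i += 2) {
        const bool rhs = (t[i + 1] == "1");
        if (t[i] == "AND") {
            group = group && rhs;
        } else {
            result = result || group;
            group = rhs;
        }
    }
    return result || group;
}
```

For the token sequence 1 OR 0 AND 0, eval_flat computes (1 OR 0) AND 0 and returns false, while eval_and_binds_tighter computes 1 OR (0 AND 0) and returns true.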

Aggressive caching

One major factor in CMake's slowness is all the try_compile and try_run tests it performs to determine compiler and system capabilities. CMake always runs them unconditionally. Why? CMake already introduces more aggressive caching bugs via CMakeCache.txt caching find_* results. Why not just cache the results on disk and avoid compiling unnecessarily? It has to be done very carefully to avoid stale data, though: to the same degree that an actual build system tracks artifacts and dependencies, with timestamps on all headers, flags and compiler version... So just reuse the build system to cache these results! Kiln already has to track these anyway.

Turns out pkg-config is another major source of slowness once the compilation cache is used. For better or worse, pkg_check_modules() -- what CMake uses to import packages from pkg-config, implemented as a CMake module -- invokes pkg-config several times per package, and each invocation takes about 20ms on my machine. Which kills the entire "just configure every time" approach I dreamed of. I am all for software correctness and no surprises, but this is a deal breaker for the entire project. And there is no real solution, because pkg-config is its own thing that I have no control over, and shimming pkg_check_modules() is also a terrible problem to solve. Matching the exact behaviour and all the quirks is a daunting task.

"You know.. the behaviour of pkg-config is actually well known and stable".. an intrusive thought, I know, but I decided to give it a shot. We know what paths pkg-config cares about. We know what variables pkg-config uses. We can cache the result from pkg-config, keyed on the package name, path mtime and other things. This way using data from pkg-config has near 0 cost on the second run and we are still 99.9% right. Good enough. I ended up doing the same for certain other software queries on the critical path of configuration too. Works wonders.

Through benchmarking, it turns out globbing is also a major bottleneck on large projects, for 2 reasons. First, globs are naturally slow, but they can be cached. File creation and deletion update the directory mtime, so the cache is guaranteed to be valid as long as the mtime has not changed. For recursive globs the same holds; the only thing that changes is the need to recursively validate subdirectory mtimes, and only affected subdirectories need to be re-globbed. Secondly, recursive globs in CMake do not list directories by default; the LIST_DIRECTORIES option controls whether directories are included in the result. Turns out the only reason I see projects use LIST_DIRECTORIES is because they want the directories. Usually they then loop through the glob result and filter out the files. Each IS_DIRECTORY check is a separate filesystem stat call, which is slow. But that information is already available from the glob result itself, so it can also be cached to significantly speed up this class of operations.
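A rough sketch of the mtime-validated cache for a single, non-recursive directory. To keep it deterministic, the caller passes in the directory's current mtime and a scan callback; in a real implementation those would presumably come from std::filesystem::last_write_time and std::filesystem::directory_iterator. GlobCache, Listing and ScanFn are names invented here, not Kiln's API.

```cpp
#include <cassert>
#include <cstdint>
#include <functional>
#include <string>
#include <unordered_map>
#include <utility>
#include <vector>

class GlobCache {
public:
    using Listing = std::vector<std::string>;
    using ScanFn = std::function<Listing(const std::string& dir)>;

    explicit GlobCache(ScanFn scan) : scan_(std::move(scan)) {
        assert(scan_ && "a scan callback is required");
    }

    // Returns the cached listing while the directory mtime is unchanged;
    // otherwise rescans just that directory and refreshes the entry.
    const Listing& list(const std::string& dir, std::int64_t dir_mtime) {
        const auto it = cache_.find(dir);
        if (it != cache_.end() && it->second.mtime == dir_mtime) {
            return it->second.files;
        }
        Entry& entry = cache_[dir];
        entry.mtime = dir_mtime;
        entry.files = scan_(dir);
        return entry.files;
    }

private:
    struct Entry {
        std::int64_t mtime = 0;
        Listing files;
    };
    ScanFn scan_;
    std::unordered_map<std::string, Entry> cache_;
};
```

A recursive glob would keep one such entry per subdirectory and revalidate each one's mtime, so a new file in one subdirectory only triggers a rescan there.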

Build

After interpreting the CMake source, Kiln has a collection of targets that need to be built. This has been a solved problem for ages. Look at the target dependency graph, lower that into a per-artifact dependency graph, scan the file system using mtime to determine if an artifact needs to be rebuilt (rebuilds taint dependents), and walk the dependency graph to build all artifacts in the correct order. The non-obvious fact is this: CMake does not check if a source file is available at configuration time, because that file might be generated somewhere later in the CMakeLists interpretation run. Instead it does the scan at build system generation, and only errors if it can find the file neither as already existing nor as an output of a command that generates it.
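The "scan mtimes, taint dependents, build in order" part can be sketched in a few lines. This is an illustration under simplifying assumptions, not Kiln's scheduler: the graph is assumed acyclic, mtimes are plain integers supplied in a map (a missing entry means the file does not exist), and Artifact, mark_stale and plan_rebuild are invented names.

```cpp
#include <cstdint>
#include <map>
#include <string>
#include <vector>

struct Artifact {
    std::vector<std::string> inputs;  // source files or other artifacts
};
using Graph = std::map<std::string, Artifact>;        // output name -> its inputs
using Mtimes = std::map<std::string, std::int64_t>;   // file name -> mtime

// Depth-first walk. An artifact is stale if it is missing, older than an
// input, or depends on an artifact that is itself stale (taint propagation).
inline bool mark_stale(const std::string& name, const Graph& graph, const Mtimes& mtimes,
                       std::map<std::string, bool>& seen, std::vector<std::string>& order) {
    if (const auto done = seen.find(name); done != seen.end()) return done->second;
    const auto node = graph.find(name);
    if (node == graph.end()) return false;  // plain source file: nothing to rebuild
    const auto self = mtimes.find(name);
    bool stale = (self == mtimes.end());    // output does not exist yet
    for (const std::string& input : node->second.inputs) {
        if (mark_stale(input, graph, mtimes, seen, order)) stale = true;
        const auto in = mtimes.find(input);
        if (self != mtimes.end() && in != mtimes.end() && in->second > self->second) {
            stale = true;
        }
    }
    seen[name] = stale;
    if (stale) order.push_back(name);  // post-order: inputs come before dependents
    return stale;
}

// Returns the artifacts that need rebuilding, in a valid build order.
inline std::vector<std::string> plan_rebuild(const Graph& graph, const Mtimes& mtimes) {
    std::map<std::string, bool> seen;
    std::vector<std::string> order;
    for (const auto& entry : graph) mark_stale(entry.first, graph, mtimes, seen, order);
    return order;
}
```

For example, if main.cpp is newer than main.o while util.o is up to date, the plan is main.o followed by the executable that links both; util.o is left alone.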

That last part is particularly annoying. Implied dependencies. Kiln has to actually look at what custom commands generate, resolve their paths, correctly accept or error out, and wire up the build graph in the correct order. Some dependencies are hidden and there is no way of knowing that they even exist. CMake "solves" (in air quotes) this by running all build tasks directly under the "all" target first, before any of the compilation and linking can happen.

Otherwise Kiln does Ninja style parallel builds. Building a target does not depend on its dependencies being fully built (it just needs the inputs to the compiler to be ready). Which honestly should have been the default way back in 2015 already. The reason I still use Makefiles today is that Ninja takes color away from compiler output.. unless I set -DCMAKE_CXX_FLAGS="-fdiagnostics-color=always" manually, which.. I just get Kiln to do automatically for me and make it a non-issue.

Generator expressions

Generator expressions are the bane of my existence building Kiln. They look like $<KEYWORD:content> and they are everywhere in modern CMake. The critical thing to understand is: genex are not evaluated during configuration. They are plain strings at configuration time and only evaluated when converting targets into build commands. This has consequences that are not obvious at first.

The simplest genex is the conditional: $<1:value> returns "value", $<0:value> returns empty. $<CONFIG:Debug> returns "1" when building in Debug mode, "0" otherwise. These compose into the pattern you see everywhere:

target_compile_options(myapp PRIVATE

$<$<CONFIG:Debug>:-g>

$<$<CONFIG:Release>:-O3>

)

This reads as "if Debug, add -g; if Release, add -O3". The outer genex $<CONDITION:value> takes the inner genex as its condition. It's genex inception -- the "keyword" position itself contains a $<...> expression that gets evaluated first. Now. GenEx is evaluated per source, not per target. It's "generator expressions" after all not "target expressions". This is why the following works even if the target has both C and C++ sources:

set_target_properties(myapp PROPERTIES

COMPILE_FLAGS

$<$<COMPILE_LANGUAGE:CXX>:-std=c++17>

)

Each source file gets its own evaluation context with the appropriate language. A .c file sees $<COMPILE_LANGUAGE:CXX> evaluate to "0", a .cpp file sees "1". This also makes caching genex evaluation annoying -- you can't just evaluate once per target, you need to consider each source.

Because genex are plain strings during configuration, they interact with list operations in unfortunate ways:

# genex_list_interaction.cmake

set(FLAGS "$<1:-Wall;-Wextra>")

list(LENGTH FLAGS len)

message("Length: ${len}")

list(GET FLAGS 0 first)

message("Element 0: ${first}")

❯ cmake -P genex_list_interaction.cmake

Length: 2

Element 0: $<1:-Wall

The list has 2 elements: $<1:-Wall and -Wextra>. The semicolon inside the genex is treated as a list separator because at configuration time, CMake doesn't know or care that it's inside a genex. The genex gets torn apart into fragments that are meaningless on their own. Kiln has to deal with this. When converting target properties into actual compiler flags, Kiln scans the list elements and reassembles fragments by tracking angle bracket depth. If an element has unbalanced $< without matching >, it gets joined with subsequent elements (inserting semicolons back) until the brackets balance. It works but it's the kind of logic you write with a sinking feeling.
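The reassembly step can be sketched roughly as follows. This is an illustration of the bracket-tracking idea, not Kiln's code: reassemble_genex is a made-up name, only `$<` counts as an opener, and unbalanced input is passed through as one trailing element.

```cpp
#include <cstddef>
#include <string>
#include <utility>
#include <vector>

// Joins list elements that were split in the middle of a generator expression.
// Tracks "$<" ... ">" nesting across elements and re-inserts the ';' separator
// between fragments while the nesting depth is still above zero.
inline std::vector<std::string> reassemble_genex(const std::vector<std::string>& elements) {
    std::vector<std::string> out;
    std::string pending;
    int depth = 0;
    for (const std::string& el : elements) {
        if (depth > 0) pending += ';';  // restore the separator the list split consumed
        pending += el;
        for (std::size_t i = 0; i < el.size(); ++i) {
            if (el[i] == '$' && i + 1 < el.size() && el[i + 1] == '<') {
                ++depth;
                ++i;  // skip the '<' that belongs to this "$<"
            } else if (el[i] == '>' && depth > 0) {
                --depth;
            }
        }
        if (depth == 0) {
            out.push_back(std::move(pending));
            pending.clear();
        }
    }
    if (depth > 0) out.push_back(std::move(pending));  // unbalanced: keep what we have
    return out;
}
```

Fed the fragments $<1:-Wall and -Wextra> followed by -O2, it returns two elements: the rejoined $<1:-Wall;-Wextra> and the untouched -O2.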

So how do you put a semicolon inside a genex? You use $<SEMICOLON>, a genex that evaluates to a literal ;. Similarly $<COMMA> evaluates to , because commas are argument separators inside genex, $<ANGLE-R> evaluates to > because > closes the genex, and $<QUOTE> evaluates to ". These are not special syntax. They are regular genex that happen to evaluate to a single character. The parser treats them identically to $<CONFIG:Debug> or any other genex.

$<ANGLE-R> deserves special attention. Say you want to conditionally output >> as a compile flag. You can't write $<$<CONFIG:Debug>:->> because the parser sees the first > as closing the outer genex. So you write $<$<CONFIG:Debug>:$<ANGLE-R>$<ANGLE-R>>. Projects (looking at you Qt) abuse $<ANGLE-R> to compose complex genex where > appears in the output. Every time I see it in the wild I have to mentally parse the nesting by hand.

Inside a genex, commas separate arguments -- but only at the top nesting level. $<IF:cond,true_val,false_val> is the ternary form with three comma-separated arguments. But $<IF:$<STREQUAL:$<CONFIG>,Debug>,yes,no> works because the commas inside $<STREQUAL:...> are at a deeper nesting level and aren't treated as separators for the outer $<IF>. The parser tracks < and > depth while scanning for commas. Comma at depth 0 = separator. Comma at depth > 0 = literal. This is the same bracket-balancing logic used for the > that closes the genex itself, and it has to be correct or everything falls apart.
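A small sketch of top-level comma splitting, operating on the text after the keyword's colon. It counts only `$<` as an opener, which is an assumption of this sketch rather than something the paragraph above spells out; split_genex_args is a hypothetical helper name.

```cpp
#include <cstddef>
#include <string>
#include <string_view>
#include <utility>
#include <vector>

// Splits genex arguments at commas that are not nested inside an inner "$<...>".
inline std::vector<std::string> split_genex_args(std::string_view args) {
    std::vector<std::string> out;
    std::string current;
    int depth = 0;
    for (std::size_t i = 0; i < args.size(); ++i) {
        const char c = args[i];
        if (c == '$' && i + 1 < args.size() && args[i + 1] == '<') {
            ++depth;
            current += "$<";
            ++i;  // consume the '<' as part of the opener
        } else if (c == '>' && depth > 0) {
            --depth;
            current += c;
        } else if (c == ',' && depth == 0) {
            out.push_back(std::move(current));  // top-level comma: argument boundary
            current.clear();
        } else {
            current += c;  // nested commas and everything else are literal here
        }
    }
    out.push_back(std::move(current));
    return out;
}
```

For the argument text of the $<IF:...> example above, this yields three arguments: the whole inner $<STREQUAL:$<CONFIG>,Debug> expression, then yes, then no.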

Here's a subtle one that bit me. The way a genex result is split into compiler flags depends on whether the original expression was a "pure" genex or mixed with literal text:

target_compile_options(myapp PRIVATE

$<1:-Wall -Wextra> # pure genex -> split by whitespace → ["-Wall", "-Wextra"]

"$<1:-Wall -Wextra>" # quoted -> kept as single string "-Wall -Wextra"

prefix$<1:-Wall> # mixed -> kept as single string "prefix-Wall"

)

An unquoted argument that is entirely a genex gets its result split the same way any unquoted argument would. A quoted argument or one with literal text mixed in stays as one element. Kiln tracks whether each list element is a "pure genex" during property evaluation to replicate this. Get it wrong and you either pass a single mega-flag to the compiler or split something that shouldn't be split.

Some genex can't be resolved during property resolution. When Kiln resolves transitive target properties -- propagating INTERFACE properties from dependencies -- it encounters genex like $<COMPILE_LANGUAGE:CXX> but doesn't know the source language yet. Those get deferred: left as-is in the property value and only evaluated later when generating the actual compile command for each source file. This is why you sometimes see genex "leak" through property resolution. It's intentional. The alternative would be to duplicate the entire property set per language, which is wasteful and fragile. $<TARGET_PROPERTY:tgt,prop> is another fun one. It looks up a property on a target, but that property might itself contain genex. So the evaluator has to recursively parse and evaluate the result. Genex all the way down.

$<LINK_ONLY:lib> evaluates to just "lib" -- same as if the wrapper wasn't there. But it carries metadata: this dependency should only be linked, not have its INTERFACE properties propagated to the consumer. It's a genex that affects build semantics without changing its string value. Kiln handles this by returning a struct from link library evaluation that carries a link_only flag alongside the evaluated string. The flag is checked during transitive property resolution to skip INTERFACE propagation for that dependency.

target_include_directories(mylib PUBLIC

$<BUILD_INTERFACE:${CMAKE_CURRENT_SOURCE_DIR}/include>

$<INSTALL_INTERFACE:include>

)

During build, $<BUILD_INTERFACE:...> returns the path and $<INSTALL_INTERFACE:...> returns empty. During install, the reverse. Same property, two different results depending on phase. This is how CMake handles the common pattern of "headers are here during build but there during install". Every genex evaluation in Kiln carries a phase context (BUILD or INSTALL) that gates these expressions.

Now, the parser has to figure out which > closes which genex. You might assume it's simple bracket matching -- every < pairs with a >. It's not. Only $< counts as an opening delimiter. A bare < in genex content is just a literal character. So $<BOOL:a<b> is perfectly valid -- the < after a is content, the > closes the genex, and the result is 1 (because a<b is a non-empty string, therefore truthy). Kiln initially got this wrong and counted all < characters for depth, which meant $<BOOL:a<b> would error with "unmatched <". CMake doesn't care about bare < at all.

What CMake does track is nested $< pairs. In $<$<CONFIG:Debug>:-g>, the $< before CONFIG increments the nesting depth, the > after Debug decrements it, and the final > closes the outer genex. The parser knows that $<CONFIG:Debug> is a complete inner genex because it matched $< with >, and the : between CONFIG and Debug is at depth 1, so it's not the separator for the outer genex.
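The matching rule from the last two paragraphs, as a sketch: find the `>` that closes a genex while counting only `$<` as an opener and treating a bare `<` as ordinary content. find_genex_close is an invented helper, not a CMake or Kiln function.

```cpp
#include <cstddef>
#include <string_view>

// Returns the index of the '>' that closes the genex starting at `start`
// (which must point at "$<"), or npos if there is no genex there or it never closes.
inline std::size_t find_genex_close(std::string_view s, std::size_t start = 0) {
    if (start + 1 >= s.size() || s[start] != '$' || s[start + 1] != '<') {
        return std::string_view::npos;
    }
    int depth = 0;
    for (std::size_t i = start; i < s.size(); ++i) {
        if (s[i] == '$' && i + 1 < s.size() && s[i + 1] == '<') {
            ++depth;
            ++i;  // the '<' belongs to the opener, do not look at it again
        } else if (s[i] == '>') {
            if (--depth == 0) return i;  // closes the outermost genex
        }
        // A bare '<' falls through: it is just content.
    }
    return std::string_view::npos;
}
```

On $<BOOL:a<b> this returns the index of the final >, and on $<$<CONFIG:Debug>:-g> it skips the inner closer and returns the last character. Everything between the opener and that index is what gets handed to keyword lookup and argument splitting.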

$<ANGLE-R> works because the parser reads the keyword ANGLE-R, hits > at depth 0 as the closer, recognizes it as a constant genex, and returns a literal >. But what if you try to be clever and write $<ANGLE-R$<ANGLE-R>>? You might expect this to mean "ANGLE-R" concatenated with the result of $<ANGLE-R> (which is >), giving you the keyword ANGLE-R>. That's not what happens. The $< before the second ANGLE-R increments depth, the > after it decrements depth back to 0, and the trailing > closes the outer genex. The entire keyword is ANGLE-R$<ANGLE-R> as one blob. CMake looks it up, finds nothing, and errors with "Expression did not evaluate to a known generator expression". Genex are not evaluated during parsing -- you can't compose a keyword out of other genex results.

$<ANGLE-R> is the only reason you can produce genex-like strings as output via genex composition. A bare $ is just a literal character -- it's only $< together that starts a genex. But that means any $ followed by < in the output will be interpreted as the start of a new genex, and there's no way to escape that sequence.

Getting all of this right in Kiln was a process of discovering what CMake actually does versus what the documentation implies. Tons of guesswork. The documentation describes the syntax but not the parsing rules. The only reliable reference is CMake's behavior itself, and sometimes that behavior is surprising.

ExternalProject/FetchContent support

I think this was supposed to be CMake's idea for solving the C and C++ dependency management problem on everything-not-linux/bsd. The execution is so weird that adoption was hindered. Because CMake is a meta build system, the only way to get an external project going is to launch a new CMake instance at build time to configure and build it there. And the parent CMake process has no idea where the artifacts are located when the build system is generated. If some expectation doesn't line up (despite compiling correctly).. you find out at link time when the linker complains about a missing file. Then it becomes a 3 hour debugging session figuring out where it went wrong.

Being a build system fixes this. The process is still alive and can spawn a new interpreter to configure the external project. After configuring, the build system can then just yank the build graph from the external project, merge it into its own build graph and revalidate the entire thing. Early error detection. It manifests like so:

[ 1/258] Configuring jsoncpp

[ 2/280] Ready jsoncpp <- injected into main graph

[ 3/280] Building src/main.cpp

[ 4/280] Building jsoncpp/src/lib_json/json_reader.cpp

...

Merging the external project's build graph also benefits build speed. The way CMake does it builds external projects one by one. Parallelism is limited within each project. Merging allows Kiln to do Ninja style parallel builds across all projects.

This however needs a lot of hacks to work. Sometimes people use ExternalProject_Add() with custom build commands and invoke make directly (because that's CMake's default behavior). Kiln needs to detect these sorts of exceptions and handle them gracefully.

Profiling

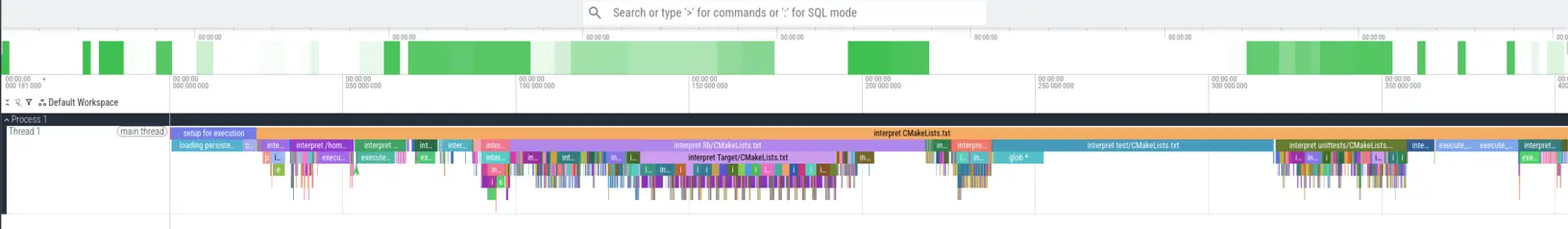

Kiln runs the CMake code every time, so speed is critical for user experience. The --profile flag in Kiln outputs a Chrome tracing file that can be opened in Perfetto to see where time is being spent during configuration and build. To reduce the impact on interpretation, I made it profile only the important stuff: running commands, compilation, including or executing a new CMake script, parsing CMake, etc. The picture shows the profile of my workstation configuring LLVM with cache. It doesn't even take a second.
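For readers who have not seen the Chrome tracing format: it is a JSON file with a `traceEvents` list, where a "complete" event (`"ph": "X"`) carries a name, a start timestamp and a duration in microseconds. The sketch below shows the general idea of coarse-grained span recording in Python. It is an illustration only; the `span` and `trace_json` helpers and the span names are hypothetical and say nothing about how Kiln implements --profile internally.

```python
import json
import time
from contextlib import contextmanager

events = []  # finished spans as Chrome Trace Event "complete" (ph="X") records


@contextmanager
def span(name, category="config"):
    """Time the enclosed block and record it as one trace event."""
    start = time.perf_counter_ns()
    try:
        yield
    finally:
        end = time.perf_counter_ns()
        events.append({
            "name": name,
            "cat": category,
            "ph": "X",
            "ts": start / 1000,           # start time in microseconds
            "dur": (end - start) / 1000,  # duration in microseconds
            "pid": 1,
            "tid": 1,
        })


def trace_json():
    """Serialize the recorded spans in the JSON object form of the trace format."""
    return json.dumps({"traceEvents": events})


# Only wrap the expensive, interesting steps, not every interpreted command.
with span("include(SomeModule.cmake)"):
    with span("parse", "parse"):
        pass

print(len(events))  # prints 2
```

Writing the string returned by `trace_json()` to a file gives something a trace viewer can load. Keeping the instrumented points coarse is what keeps the overhead low.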

Debugging CMake

This is more for my own needs than a user-facing feature. Things often go wrong, and AIs, though smart, have a limited context window. I am the one figuring out what is wrong at the end of the day. CMake has a trace mode, which Kiln does emulate. But that's difficult to read and not helpful most of the time. It's really just trying to find a needle in a haystack. So two things: target introspection and a debugger.

kiln_dump_target_info(mytarget [AT_BUILD]) dumps what Kiln knows about a target, either at that very moment or at graph generation time, by which point all property propagation is done and flags are resolved. For example, here is what I get from drogon (a C++ web framework that I maintain, heavily truncated to keep the post clean):

[INFO] === Target: drogon ===

Type: STATIC_LIBRARY

Source Dir: /home/marty/Documents/drogon

Binary Dir: /home/marty/Documents/drogon/build/debug

Output Path: /home/marty/Documents/drogon/build/debug/libdrogon.a

Imported: no

--- Unresolved Properties ---

INCLUDE_DIRECTORIES:

PUBLIC:

.....

--- Resolved Properties (after dependency resolution) ---

INCLUDE_DIRECTORIES (for building this target):

- /home/marty/Documents/drogon/trantor

- /home/marty/Documents/drogon/lib/inc

- ...

SYSTEM_INCLUDE_DIRECTORIES (for building this target):

- /usr/include

- /usr/include/uuid

- /usr/include/postgresql/server

- /usr/include/mysql

COMPILE_DEFINITIONS (for building this target):

- USE_OSSP_UUID=0

- USE_BROTLI

- HAS_MYSQL_OPTIONSV

- USE_REDIS

- HAS_YAML_CPP

LINK_LIBRARIES (for building this target):

- /home/marty/Documents/drogon/build/debug/trantor/libtrantor.a

- /usr/lib/libssl.so.3

- /usr/lib/libcrypto.so.3

- /usr/lib/libcares.so.2.19.5

- ....

- /usr/lib/libz.so.1.3.2

--- Resolved Interface Properties (propagated to dependents) ---

INCLUDE_DIRECTORIES (for dependents):

- /home/marty/Documents/drogon/trantor

- /home/marty/Documents/drogon/lib/inc

- ....

SYSTEM_INCLUDE_DIRECTORIES (for dependents):

- /usr/include

COMPILE_DEFINITIONS (for dependents):

- HAS_YAML_CPP

LINK_LIBRARIES (for dependents):

- /home/marty/Documents/drogon/build/debug/trantor/libtrantor.a

- /usr/lib/libssl.so.3

- /usr/lib/libcrypto.so.3

- /usr/lib/libcares.so.2.19.5

- pthread

- /usr/lib/libjsoncpp.so.26

- yaml-cpp

I don't know why this is not a standard CMake feature already. This would have saved me weeks across my career.
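The split between "for building this target" and "for dependents" in the dump follows CMake's PRIVATE / PUBLIC / INTERFACE model. Below is a deliberately simplified Python sketch of that resolution for include directories only. The `Target` class, the helper names and the `net` / `web` / `app` targets are hypothetical, and real CMake (and presumably Kiln) handles far more: generator expressions, ordering rules, SYSTEM includes and so on.

```python
from dataclasses import dataclass, field


@dataclass
class Target:
    name: str
    private_includes: list = field(default_factory=list)    # only for building this target
    public_includes: list = field(default_factory=list)     # for this target and its dependents
    interface_includes: list = field(default_factory=list)  # only for dependents
    public_links: list = field(default_factory=list)        # dependencies re-exported to dependents
    private_links: list = field(default_factory=list)       # dependencies not re-exported


def _dedupe(items):
    """Drop duplicates while keeping first-seen order."""
    return list(dict.fromkeys(items))


def resolve_interface_includes(target, seen=None):
    """Include dirs that a dependent of `target` receives, transitively."""
    seen = set() if seen is None else seen
    if target.name in seen:  # guard against dependency cycles
        return []
    seen.add(target.name)
    dirs = target.public_includes + target.interface_includes
    for dep in target.public_links:
        dirs += resolve_interface_includes(dep, seen)
    return _dedupe(dirs)


def resolve_build_includes(target):
    """Include dirs used when compiling `target` itself."""
    dirs = target.private_includes + target.public_includes
    for dep in target.public_links + target.private_links:
        dirs += resolve_interface_includes(dep)
    return _dedupe(dirs)


net = Target("net", private_includes=["net/src"], public_includes=["net/inc"])
web = Target("web", private_includes=["web/src"], public_includes=["web/inc"],
             public_links=[net])
app = Target("app", private_links=[web])

print(resolve_build_includes(web))      # prints ['web/src', 'web/inc', 'net/inc']
print(resolve_interface_includes(web))  # prints ['web/inc', 'net/inc']
print(resolve_build_includes(app))      # prints ['web/inc', 'net/inc']
```

Seeing the resolved lists next to the unresolved ones is what makes a dump like the one above useful: a missing or unexpected directory can be traced back to the PUBLIC or PRIVATE keyword that caused it.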

There's also the interactive CMake debugger behind the --debugger flag. It drops you into a GDB-style debugger where you can inspect variables, step through code and break on a line, a function or a printed message.

❯ kiln -C ~/Documents/drogon --debugger

(kiln) > help

Commands:

break <line> (b) Set breakpoint at line in current file

break <file>:<line> (b) Set location breakpoint

break <command> (b) Break on command name

continue (c) Run until next breakpoint

step (s) Step to next command

next (n) Step over (stay at current call depth)

print <var> (p) Print variable value

backtrace (bt) Show call stack

list (l) Show source around current line/frame

frame [N] (f) Select stack frame N (show current if no arg)

up [N] Move up N stack frames (default: 1)

down [N] Move down N stack frames (default: 1)

info variables List all visible variables

info breakpoints List all breakpoints

break-on-message <p> (bm) Break when message matches pattern

watch <var> (w) Break when variable changes

delete <n> (d) Delete breakpoint by ID

quit (q) Exit kiln

help (h) Show this help

(kiln) > bm Trantor

Will break on messages matching "Trantor"

(kiln) > c

[STATUS] compiler: GNU

-- Checking Performing Test COMPILER_HAS_HIDDEN_VISIBILITY

-- Checking Performing Test COMPILER_HAS_HIDDEN_VISIBILITY - Success

-- Checking Performing Test COMPILER_HAS_HIDDEN_INLINE_VISIBILITY

-- Checking Performing Test COMPILER_HAS_HIDDEN_INLINE_VISIBILITY - Success

-- Checking Performing Test COMPILER_HAS_DEPRECATED_ATTR

-- Checking Performing Test COMPILER_HAS_DEPRECATED_ATTR - Success

-- Checking Looking for C++ include any

-- Checking Looking for C++ include any - found

-- Checking Looking for C++ include string_view

-- Checking Looking for C++ include string_view - found

-- Checking Looking for C++ include coroutine

-- Checking Looking for C++ include coroutine - not found

[STATUS] Found module: /usr/share/cmake/Modules/FindOpenSSL.cmake

[STATUS] Found OpenSSL: /usr/lib/libcrypto.so.3 (found version "3.6.1")

[STATUS] Trantor using SSL library: OpenSSL

(kiln) Break on message matching "Trantor": Trantor using SSL library: OpenSSL

(kiln) /home/marty/Documents/drogon/trantor/CMakeLists.txt:234 message(STATUS "Trantor using SSL library: ${TRANTOR_TLS_PROVIDER}")

(kiln) > l

229 trantor/utils/crypto/sha1.h

230 trantor/utils/crypto/sha256.h

231 )

232 endif()

233

> 234 message(STATUS "Trantor using SSL library: ${TRANTOR_TLS_PROVIDER}")

235 target_compile_definitions(${PROJECT_NAME} PRIVATE TRANTOR_TLS_PROVIDER=${TRANTOR_TLS_PROVIDER})

236

237 set(HAVE_SPDLOG NO)

238 if(USE_SPDLOG)

239 find_package(spdlog CONFIG)

(kiln) > p TRANTOR_TLS_PROVIDER

TRANTOR_TLS_PROVIDER = "OpenSSL"

CMake has DAP support, so debugging is possible from VSCode. But I have never been a fan of that, and the integration always seems flaky at best. Interactive console debugging is, to me, way cooler and faster.

Performance

What does this all add up to? Kiln comes with built-in benchmarks under the benchmark/ directory that implement classic algorithms to test pure interpreter speed. On my Intel 245K machine, the results against CMake 4.2.3 (the CMake version and build that Arch Linux shipped at the time) are as follows. The --no-sys-init flag is needed because Kiln, unconventionally, populates compiler information even in script mode:

8queens.cmake (Solving the 8 queens problem)

❯ hyperfine --warmup=3 'cmake -P benchmark/8queens.cmake' 'build/release/kiln -P benchmark/8queens.cmake --no-sys-init'

Benchmark 1: cmake -P benchmark/8queens.cmake

Time (mean ± σ): 2.488 s ± 0.024 s [User: 2.407 s, System: 0.073 s]

Range (min … max): 2.425 s … 2.508 s 10 runs

Benchmark 2: build/release/kiln -P benchmark/8queens.cmake --no-sys-init

Time (mean ± σ): 182.4 ms ± 2.7 ms [User: 176.0 ms, System: 5.6 ms]

Range (min … max): 176.5 ms … 187.4 ms 16 runs

Summary

build/release/kiln -P benchmark/8queens.cmake --no-sys-init ran

13.64 ± 0.24 times faster than cmake -P benchmark/8queens.cmake

And Knight's tour (knights-tour.cmake)

❯ hyperfine --warmup=3 'cmake -DN=40 -P benchmark/knights-tour.cmake' 'build/release/kiln -DN=40 -P benchmark/knights-tour.cmake --no-sys-init'

Benchmark 1: cmake -DN=40 -P benchmark/knights-tour.cmake

Time (mean ± σ): 2.289 s ± 0.054 s [User: 2.208 s, System: 0.073 s]

Range (min … max): 2.248 s … 2.401 s 10 runs

Benchmark 2: build/release/kiln -DN=40 -P benchmark/knights-tour.cmake --no-sys-init

Time (mean ± σ): 206.2 ms ± 59.3 ms [User: 198.2 ms, System: 6.9 ms]

Range (min … max): 163.3 ms … 312.7 ms 17 runs

Warning: Statistical outliers were detected. Consider re-running this benchmark on a quiet system without any interferences from other programs. It might help to use the '--warmup' or '--prepare' options.

Summary

build/release/kiln -DN=40 -P benchmark/knights-tour.cmake --no-sys-init ran

11.10 ± 3.20 times faster than cmake -DN=40 -P benchmark/knights-tour.cmake

Synthetic benchmarks are fun but do not represent real world workloads. Benchmarking real projects is trickier as CMake always writes the build system to disk. But luckily CMake self-reports configuration time. Take LLVM. Kiln is 3.8 ~ 20 times faster than CMake on this task, depending on how you count it:

❯ pwd

/home/marty/Documents/not-my-projects/llvm-project/llvm/build

(Without CMakeCache.txt)

❯ cmake ..

...

-- Compiling and running to test HAVE_PTHREAD_AFFINITY

-- Performing Test HAVE_PTHREAD_AFFINITY -- success

-- Configuring done (16.8s)

-- Generating done (15.3s)

-- Build files have been written to: /home/marty/Documents/not-my-projects/llvm-project/llvm/build

(With warm CMakeCache.txt)

❯ cmake ..

...

-- Performing Test HAVE_STEADY_CLOCK -- success

-- Performing Test HAVE_PTHREAD_AFFINITY -- success

-- Configuring done (6.2s)

-- Generating done (8.4s)

-- Build files have been written to: /home/marty/Documents/not-my-projects/llvm-project/llvm/build

Whereas if I run the standard release build of Kiln:

❯ hyperfine --warmup=3 'build/relwithdebinfo/kiln -C ~/Documents/not-my-projects/llvm-project/llvm --config-only'

Benchmark 1: build/relwithdebinfo/kiln -C ~/Documents/not-my-projects/llvm-project/llvm --config-only

Time (mean ± σ): 1.598 s ± 0.077 s [User: 1.242 s, System: 0.304 s]

Range (min … max): 1.513 s … 1.757 s 10 runs

There is the argument that Kiln is cheating by caching everything. True. But Kiln does it in a way where caching does not cause stale results, which CMake demonstrably cannot do well. But point taken: Kiln has a --fresh flag that bypasses caches. It is still 2x faster than CMake without cache, excluding the generation of build systems.

❯ hyperfine --warmup=3 'build/relwithdebinfo/kiln -C ~/Documents/not-my-projects/llvm-project/llvm --config-only --fresh'

Benchmark 1: build/relwithdebinfo/kiln -C ~/Documents/not-my-projects/llvm-project/llvm --config-only --fresh

Time (mean ± σ): 7.465 s ± 0.629 s [User: 5.518 s, System: 1.869 s]

Range (min … max): 6.702 s … 8.880 s 10 runs
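For anyone wanting to check the ratios, the arithmetic is below. The timings are copied from the runs above. Which pairs to compare is my reading of "depends on how you count it", so treat the pairing as an assumption: warm CMake configure vs cached Kiln for the low end, cold CMake configure plus generate vs cached Kiln for the high end, and cold CMake configure vs Kiln --fresh for the no-cache case.

```python
# Timings in seconds, copied from the CMake and hyperfine output above.
cmake_cold_configure = 16.8
cmake_cold_generate = 15.3
cmake_warm_configure = 6.2
kiln_cached = 1.598
kiln_fresh = 7.465


def speedup(baseline, candidate):
    """How many times faster the candidate is than the baseline."""
    return baseline / candidate


# Assumed pairings, see the note above.
low = speedup(cmake_warm_configure, kiln_cached)
high = speedup(cmake_cold_configure + cmake_cold_generate, kiln_cached)
no_cache = speedup(cmake_cold_configure, kiln_fresh)

print(f"low {low:.2f}x, high {high:.2f}x, no cache {no_cache:.2f}x")
```

The low and high ends land close to the 3.8 and 20 quoted earlier, and the no-cache comparison comes out a little above 2x.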

On my workstation (AMD 7950X3D), total configuration time with cache is lower than 500ms.

❯ hyperfine 'release/kiln -C ~/Documents/not-my-projects/llvm-project/llvm --config-only'

Benchmark 1: release/kiln -C ~/Documents/not-my-projects/llvm-project/llvm --config-only

Time (mean ± σ): 454.2 ms ± 5.8 ms [User: 337.4 ms, System: 91.0 ms]

Range (min … max): 444.7 ms … 465.8 ms 10 runs

The performance difference is not an LLVM-only thing. ZeroMQ also shows significant configuration performance improvements, with Kiln taking 60ms to configure.

❯ hyperfine --warmup=3 'build/relwithdebinfo/kiln -C ~/Documents/not-my-projects/libzmq --config-only'

Benchmark 1: build/relwithdebinfo/kiln -C ~/Documents/not-my-projects/libzmq --config-only

Time (mean ± σ): 60.6 ms ± 21.8 ms [User: 41.8 ms, System: 14.7 ms]

Range (min … max): 34.2 ms … 119.4 ms 85 runs

While CMake needs 5 seconds cold and 0.1 seconds hot to configure.

❯ pwd

/home/marty/Documents/not-my-projects/libzmq/build

(cold)

❯ cmake ..

...

CMake Warning (dev) at tests/CMakeLists.txt:347 (message):

Test 'test_socket_options_fuzzer' is not known to CTest.

This warning is for project developers. Use -Wno-dev to suppress it.

-- Configuring done (5.0s)

-- Generating done (1.1s)

-- Build files have been written to: /home/marty/Documents/not-my-projects/libzmq/build

(hot)

❯ cmake ..

...

CMake Warning (dev) at tests/CMakeLists.txt:347 (message):

Test 'test_socket_options_fuzzer' is not known to CTest.

This warning is for project developers. Use -Wno-dev to suppress it.

-- Configuring done (0.1s)

-- Generating done (0.9s)

-- Build files have been written to: /home/marty/Documents/not-my-projects/libzmq/build

And an absurd workload: running an LLM (RWKV 0.1B) on CMake vs Kiln. No one should be using CMake itself to run a large language model. But Kiln is more than 20x faster, and I hope that makes the point that this performance translates to some real world benefit.

Differential fuzzing

Differential fuzzing a full language is hard. Traditional fuzzing tools like libFuzzer try to exploit bugs in a binary parser to make it either crash or hang. That is not what I am looking for. Kiln can hang if the code is actually an infinite loop. What I need to know is whether the output differs between cmake and kiln for the same input. I am a believer in the 20/80 principle: 20% of the work will deliver 80% of the results. So I wrote a small Python script that loops: ask LLMs from different vendors to come up with edge case behaviors, run both cmake and kiln, compare the output, collect diverging cases, add the result to the context and repeat. This works even though LLMs are terrible at coming up with random ideas (inferred, link below). Fixes are applied, and at some point the LLMs converged on not finding meaningful divergences. I call that good enough.

Of course this does not give the best coverage of language compatibility. 20/80. But it is good enough to get projects compiling. Then it is just a matter of trying every project, finding the edge cases and repeatedly fixing them. Kiln also runs CMake's own modules to locate libraries in the system. It turns out this is a good enough test of language compatibility, good enough to get Qt6Core compiling under Kiln. The LLM-driven fuzzing loop yields more bugs per hour than trying random projects and seeing what breaks where. That in turn helps get more builtin CMake modules running correctly. Then time can be focused on the rest of the edge cases.
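The comparison step of such a loop is small. Here is a minimal sketch of it, not the actual script: `run`, `diverges` and the `normalize` hook are names I made up, and the LLM prompting and case collection are left out. A real comparison would need a normalizer that irons out cosmetic differences, for example status lines printed as "-- ..." by one tool and "[STATUS] ..." by the other.

```python
import subprocess


def run(cmd, timeout=10):
    """Run one interpreter and return (status, stdout, stderr).

    A hang is reported as the status "timeout" instead of raising, since an
    infinite loop in the test case is legitimate behavior for both tools.
    """
    try:
        proc = subprocess.run(cmd, capture_output=True, text=True, timeout=timeout)
        return (proc.returncode, proc.stdout, proc.stderr)
    except subprocess.TimeoutExpired:
        return ("timeout", "", "")


def diverges(reference_cmd, candidate_cmd, normalize=str.strip, timeout=10):
    """True when the two commands disagree on exit status or normalized output."""
    ref_status, ref_out, ref_err = run(reference_cmd, timeout)
    cand_status, cand_out, cand_err = run(candidate_cmd, timeout)
    return (
        ref_status != cand_status
        or normalize(ref_out) != normalize(cand_out)
        or normalize(ref_err) != normalize(cand_err)
    )
```

Used on a generated case it would look something like `diverges(["cmake", "-P", "case.cmake"], ["kiln", "-P", "case.cmake", "--no-sys-init"])`, with every case that returns True kept for inspection and fed back into the next round.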

Finding a new category of bugs

CMake being the only implementation has led to hidden behavior that Kiln can expose and help fix. It turns out MariaDB contains a broken generator expression that no one noticed, and it broke Kiln when I first encountered it. Kiln has to accept it as-is because compatibility means compatibility. Armed with this knowledge, Kiln can show warnings about unbalanced generator expressions and help developers fix them.

-- Checking Performing Test HAVE_VISIBILITY_HIDDEN - Success

warning: Unbalanced generator expression (missing closing '>')

--> /home/marty/Documents/not-my-projects/mariadb-server/cmake/install_macros.cmake:291:7

|

291 | $<$<BOOL:${VCPKG_INSTALLED_DIR}>:${VCPKG_INSTALLED_DIR}/${VCPKG_TARGET_TRIPLET}/bin

| ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

= note: Accepting as-is - CMake treats generator expressions as strings at parse time.

Unfortunately this only detects static genex that exist at parse time. If the genex is constructed at configure time, Kiln won't be able to detect it and can't help with early diagnostics.
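A static check of this kind boils down to counting openers and closers. The Python sketch below is a simplified stand-in, not Kiln's parser: `unclosed_genex_depth` is a hypothetical helper that treats every `$<` as an opener and every `>` seen while inside an expression as a closer, which ignores corner cases a real implementation has to care about.

```python
def unclosed_genex_depth(text):
    """Count generator expressions opened with '$<' that never get a closing '>'."""
    depth = 0
    i = 0
    while i < len(text):
        if text.startswith("$<", i):
            depth += 1  # a new generator expression opens
            i += 2
        elif text[i] == ">" and depth > 0:
            depth -= 1  # closes the innermost open expression
            i += 1
        else:
            i += 1      # ordinary character, including '${...}' variable references
    return depth


broken = "$<$<BOOL:${VCPKG_INSTALLED_DIR}>:${VCPKG_INSTALLED_DIR}/${VCPKG_TARGET_TRIPLET}/bin"
print(unclosed_genex_depth(broken))        # prints 1
print(unclosed_genex_depth(broken + ">"))  # prints 0
```

Because it only looks at literal text, a check like this shares the limitation described above: an expression assembled from variables at configure time never passes through it in its final form.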

Open questions

Some questions I have but have no good answers to. Not for lack of trying.

How much language compatibility is enough?

Unlike C and C++, there is no formal spec of CMake. CMake is a definition-by-implementation thing. A bug in the CMake interpreter that gets popular enough becomes a feature. And the list of unintuitive CMake behaviours is very long. So much so that I think the only CMake-compliant interpreter possible is CMake itself. Kiln can only do a best-effort approximation. So the question is: how much compatibility is enough? Is 95% enough? 99%? Differential fuzzing gets you the obvious cases. But I don't remotely have enough compute to cover the full universe of projects. Some I don't even have access to, like the closed-source enterprise ones. Should I even care about compatibility with those?

More importantly, where does the cost of compatibility go vertical? Getting the first 80% is easy, and with effort I got enough to compile large real world projects. How far should I go?

How far can you push a CMake interpreter?

For the reasons explained earlier, CMake the language is difficult to build an interpreter for. Static analysis is near impossible. Interning can happen but is limited. Where is the ceiling? Sub-1-second configuring of LLVM ought to mean fast enough for most projects, right? What do people actually need?

Even if we assume complexity is a non-issue today thanks to AI (in my opinion it is still a problem: complexity means places for bugs to hide, and infra is the last place we want bugs), do I really want to spend days squeezing out 10% more interpreter speed if the majority of the time will be GCC compiling stuff anyway? What should the calculus be?

Do we actually need policy?

Policies are CMake's way of introducing breaking changes while letting users temporarily keep the old behavior without having to update their projects. Kiln is a clean slate at the engine level, so does it make sense to inherit that debt?

In my experience there are only a few policies that actually make a material difference, which is trivial to manage. Or did I just not notice where all the projects that do depend on policies are hiding?

What should C++ dependency management actually be?

FetchContent is better than ExternalProject. But Cargo is better than FetchContent. With Kiln now owning the entire build graph, how far should dependency management go in Kiln? The core dependency and build system is generic enough that it is foreseeable to slap on a different frontend: Meson, xmake, Bazel. Do we want Kiln to be the mega all-in-one build system?

Also, vcpkg and Conan exist. Are they the right answer? Or are they what we settled for because of what CMake and C++ were prior to this? Or is going full Go/Cargo the way, and we invent kiln.toml?

Can one person maintain CMake compatibility?

CMake ships new features and updates its modules every release. Kiln has to keep up. I can do automated testing across OS, distro and CMake versions to tell me what broke. But I still need to fix things myself (AIs are not yet able to do a lot of the tracing work).

Can I keep up with it? Is this kind of work sustainable?

Since it is 2026, do I just throw tokens and agents at the problem and iterate the entire GitHub worth of projects?

What's not working yet

Kiln is still a very young project and has a billion things not working, especially if you are not on Linux. Just to name a few:

- Windows / MSVC support

- BSD, macOS support (macOS Frameworks is going to be a pain)

- Cross compilation

- Java, Fortran, ISPC, OBJC, CUDA/HIP, etc.. support

- CPack (completely ignored, though install-to-fs works)

- Unity builds

I have friends working on MSVC and FreeBSD support, so that should happen at some point. But the rest depends on whether anyone sends in patches or I have the time to work on it.