Benchmarking ET-SoC-1 Naive Matrix Multiplication Performance

I am currently working on the GGML backend for the ET-SoC-1. To answer the question of "how well does this chip actually perform?", I decided to benchmark the most basic FP32 matrix multiplication implementation using the matrix engine. The plan is the same as in my previous RK3588 matmul benchmarking attempt: gather a large amount of data first, then extract useful conclusions afterward. See the previous post:

Note that the kernel being benchmarked is the most basic naive implementation, so it is expected to be slow. But that is not the point. The goal is to understand how the chip behaves under real code, learn how to approach optimization, and inform future development. The idea is to go in blind, test broadly, and see what turns up.

Introduction to the ET-SoC-1 Matrix Engine

See the ET-SoC-1 PRM for reference:

Each Minion core on the ET-SoC-1 contains 2 threads, called harts. Each even-numbered hart (hart 0, 2, 4, ...) is connected to a matrix unit capable of 16x16 matrix multiplication with FP32, FP16, and INT8 support. Odd harts are not allowed to access the matrix unit; attempting to do so triggers a hardware exception. The one exception is tensor load operations, which odd harts can issue to enable additional asynchronous loads if desired. The matrix load instructions also support 2D tensor loading: you provide the tensor width and current position, and the hardware determines where each next row begins, avoiding awkward N-dimensional address calculations on the CPU.

Attempt 1

The following source code is the algorithm being benchmarked. I made a few mistakes and only noticed them afterward, such as not properly handling the fact that there are 34 shires, along with a few benchmarking code blunders. Even so, the preliminary data is still quite useful. The benchmarked kernel is a basic matrix multiplication implementation that follows GGML's MUL_MAT format. At a high level, it does the following:

- Load matrix block A

- Load matrix block B

- Wait for A and B to finish loading

- Perform matrix multiplication and accumulate the result

- Store the result

This basic kernel has some limitations, even though the hardware likely supports more:

- The N dimension is unrestricted and can be any value

- The M and K dimensions must be multiples of 16

- FP32 only

#define TILE 16 // hardware tile size

#define NUM_HARTS 1024

int entry_point(struct ggml_et_binary_params* params, void* env) {

uint64_t hart_id = get_hart_id();

// Only hart 0 have access to tensor engine

if (hart_id & 1) {

return 0;

}

uint64_t global_id = ((hart_id >> 6) << 5) + ((hart_id >> 1) & 0x1F);

// ===== Dimensions =====

const int64_t K = params->src0.ne[0];

const int64_t M = params->src0.ne[1];

const int64_t N = params->src1.ne[1];

const int64_t ne2_0 = params->src0.ne[2];

const int64_t ne3_0 = params->src0.ne[3];

const int64_t ne2_1 = params->src1.ne[2];

const int64_t ne3_1 = params->src1.ne[3];

// ===== Byte strides =====

const int64_t nb1_0 = params->src0.nb[1];

const int64_t nb2_0 = params->src0.nb[2];

const int64_t nb3_0 = params->src0.nb[3];

const int64_t nb1_1 = params->src1.nb[1];

const int64_t nb2_1 = params->src1.nb[2];

const int64_t nb3_1 = params->src1.nb[3];

const int64_t nb1_d = params->dst.nb[1];

const int64_t nb2_d = params->dst.nb[2];

const int64_t nb3_d = params->dst.nb[3];

const char* src0_base = (const char*)params->src0.data;

const char* src1_base = (const char*)params->src1.data;

char* dst_base = (char*)params->dst.data;

setup_cache_scp();

CLEAR_TENSOR_ERROR;

// ===== Tile grid =====

const int64_t m_tiles = M / TILE;

const int64_t n_tiles = (N + TILE - 1) / TILE; // ceil for N edge

const int64_t tiles_per_batch = m_tiles * n_tiles;

const int64_t batch_count = ne2_1 * ne3_1;

const int64_t total_tiles = batch_count * tiles_per_batch;

const int64_t r2 = ne2_1 / ne2_0;

const int64_t r3 = ne3_1 / ne3_0;

for (int64_t tile = global_id; tile < total_tiles; tile += NUM_HARTS) {

const int64_t batch_idx = tile / tiles_per_batch;

const int64_t tile_in_batch = tile % tiles_per_batch;

const int64_t nb_idx = tile_in_batch / m_tiles;

const int64_t mb_idx = tile_in_batch % m_tiles;

const int64_t i3 = batch_idx / ne2_1;

const int64_t i2 = batch_idx % ne2_1;

const int64_t i2_0 = i2 / r2;

const int64_t i3_0 = i3 / r3;

const char* src0_batch = src0_base + i3_0 * nb3_0 + i2_0 * nb2_0;

const char* src1_batch = src1_base + i3 * nb3_1 + i2 * nb2_1;

char* dst_batch = dst_base + i3 * nb3_d + i2 * nb2_d;

const int64_t mb = mb_idx * TILE;

const int64_t nb = nb_idx * TILE;

// N edge: last tile may have fewer than 16 rows

const int64_t n_cur = (nb + TILE <= N) ? TILE : (N - nb);

// Accumulate across K (always full 16-wide chunks)

for (int64_t kb = 0; kb < K; kb += TILE) {

// A = src1[nb:nb+n_cur-1][kb:kb+15] -> SCP 0:n_cur-1

tensor_load(

false, false,

0, // SCP line 0

0, // plain load

0,

(uint64_t)(src1_batch + nb * nb1_1 + kb * sizeof(float)),

0,

n_cur - 1, // N edge

(uint64_t)nb1_1,

0

);

// B = transpose(src0[mb:mb+15][kb:kb+15]) -> SCP 16:31

tensor_load(

false, false,

TILE, // SCP line 16

7, // 111 -> TensorLoadTranspose32

0,

(uint64_t)(src0_batch + mb * nb1_0 + kb * sizeof(float)),

0,

TILE - 1, // always 16 K elements

(uint64_t)nb1_0,

1

);

tensor_wait(TENSOR_LOAD_WAIT_0);

tensor_wait(TENSOR_LOAD_WAIT_1);

tensor_fma(

false,

3, // BCOLS=3 -> 16 M columns (always full. calculated as `4 * BCOLS + 4`)

n_cur - 1, // AROWS: N edge

TILE - 1, // ACOLS=15 (K always full tile)

0,

false, false, false,

false,

TILE, // BSTART=16

0, // ASTART=0

0, // TensorFMA32

(kb == 0) // first pass: overwrite; else: accumulate

);

// XXX: tensor_fma outputs to f0~f31 register. It is, in theory, possible to carve out

// some space on the stack and use that as a way to perform tiled MM. But that is really

// difficult, not tried and not what the PRM wants you to do (it recommends latency hiding)

// XXX: This also means that if the compiler tried to carry any FP value across tensor_fma

// without spilling - it breaks.

tensor_wait(TENSOR_FMA_WAIT);

}

// Store n_cur rows x 64 bytes

tensor_store(

0, // STEP=0

0, // FREG=0

3, // SIZE=3 -> 64B/row (16 * SIZE + 16)

n_cur - 1, // N edge

(uint64_t)(dst_batch + nb * nb1_d + mb * sizeof(float)),

0,

(uint64_t)nb1_d

);

tensor_wait(TENSOR_STORE_WAIT);

}

FENCE; // XXX: Do we need this?

return 0;

}

The benchmarking methodology is roughly as follows: modify llama.cpp's test-backend-ops performance testing capability to generate a large MUL_MAT performance test suite. This scans common points such as square matrices, common M/K/N values (slightly botched here; it should include 16 as an explicit point instead of using 16 + 128 * i), non-aligned N values, and random sample points along the M/K/N axes to find potential performance pitfalls.

auto add_point = [&](int m, int k, int n) {

auto key = mkn_t{m, k, n};

if (seen.insert(key).second) {

test_cases.emplace_back(new test_mul_mat(GGML_TYPE_F32, GGML_TYPE_F32, m, n, k, {1, 1}, {1, 1}));

}

};

// Phase A: fine square M=K=N, step 16

for (int s = 16; s <= 8192; s += 16) {

add_point(s, s, s);

}

// Phase B: 2D M x K grids at N in {1,16,64,128,256,1024,4096}, step 128

for (int n : {1, 16, 64, 128, 256, 1024, 4096}) {

for (int m = 16; m <= 8192; m += 128) {

for (int k = 16; k <= 8192; k += 128) {

add_point(m, k, n);

}

// ensure endpoint 8192

add_point(m, 8192, n);

}

// ensure m endpoint 8192

for (int k = 16; k <= 8192; k += 128) {

add_point(8192, k, n);

}

add_point(8192, 8192, n);

}

// Phase C: fine N sweeps at 10 (M,K) pairs, step 16

std::vector<std::pair<int,int>> mk_pairs = {

{128, 128}, {256, 256}, {512, 512}, {1024, 1024}, {2048, 2048},

{4096, 4096}, {128, 4096}, {4096, 128}, {1024, 4096}, {4096, 1024}

};

for (auto [m, k] : mk_pairs) {

for (int n = 1; n <= 4096; n += 16) {

add_point(m, k, n);

}

}

// Phase D: random fill on known slices (~6000 points)

std::mt19937 rng(42);

auto rand_dim = [&](int lo, int hi, int step) -> int {

int n_steps = (hi - lo) / step;

return lo + (int)(rng() % (n_steps + 1)) * step;

};

// Grid N values and some fixed M,K values for slicing

std::vector<int> grid_ns = {1, 16, 64, 128, 256, 1024, 4096};

for (int i = 0; i < 6000; i++) {

int slice_type = rng() % 3;

if (slice_type == 0) {

int n = grid_ns[rng() % grid_ns.size()];

int m = rand_dim(16, 8192, 16);

int k = rand_dim(16, 8192, 16);

add_point(m, k, n);

} else if (slice_type == 1) {

int m = rand_dim(16, 8192, 128);

int k = rand_dim(16, 8192, 16);

int n = rand_dim(1, 4096, 16);

add_point(m, k, n);

} else {

int k = rand_dim(16, 8192, 128);

int m = rand_dim(16, 8192, 16);

int n = rand_dim(1, 4096, 16);

add_point(m, k, n);

}

}

The benchmarking run heuristics were also changed from llama.cpp's defaults. By default, llama.cpp tries to either run 100 GFLOP or target 1 second of estimated runtime. That is fine for its use case, but truly memory-bound operations would take forever under my benchmark coverage and push the total runtime past 24 hours. For compute-dense operations, the 100 GFLOP target works, but it introduces more noise than I want. So the run heuristics were changed to:

- Attempt to do at least 100 GFLOP

- Attempt to run for at least 1 second

- But if reaching 100 GFLOP would require more than an estimated 1.5 seconds

- Aim for

geometric_mean(1.5s, estimated_time_100gflop)seconds instead - With a minimum of 5 runs

This heuristic is far from perfect, but it removes enough noise to make the results more useful.

Results

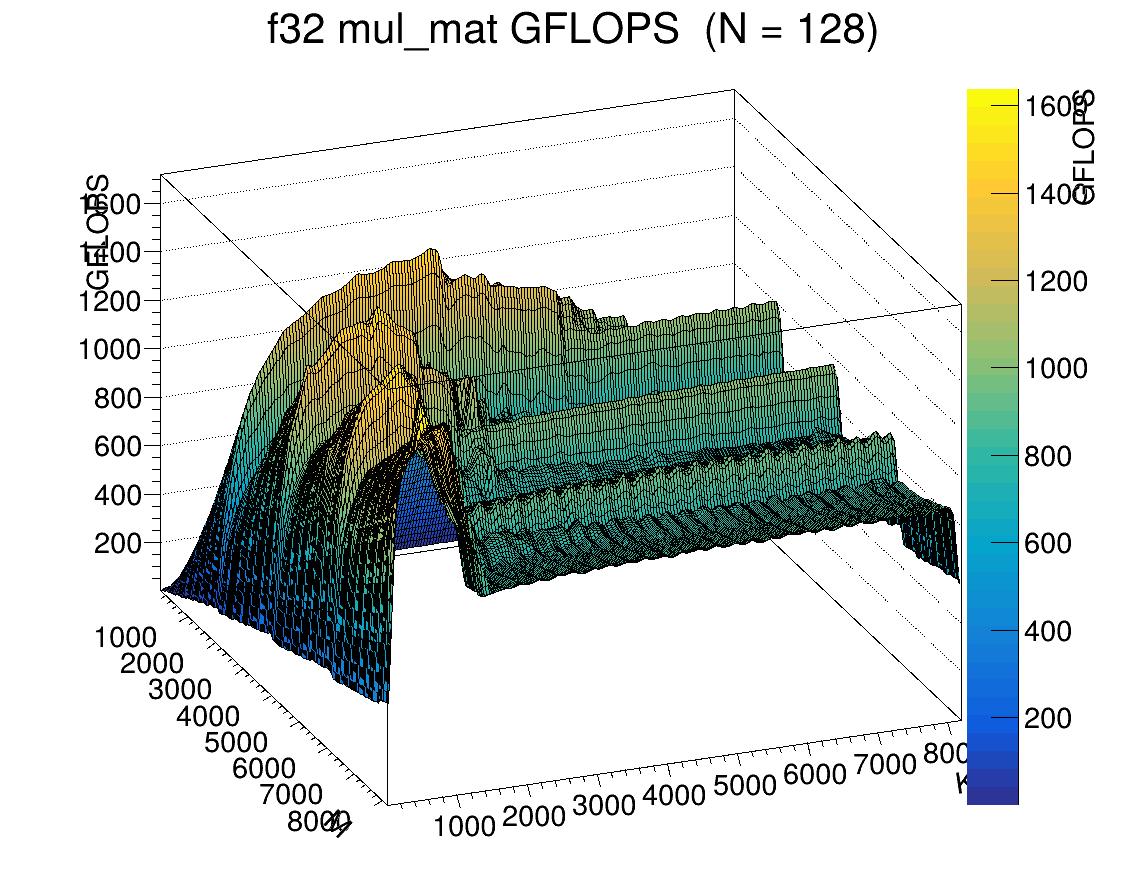

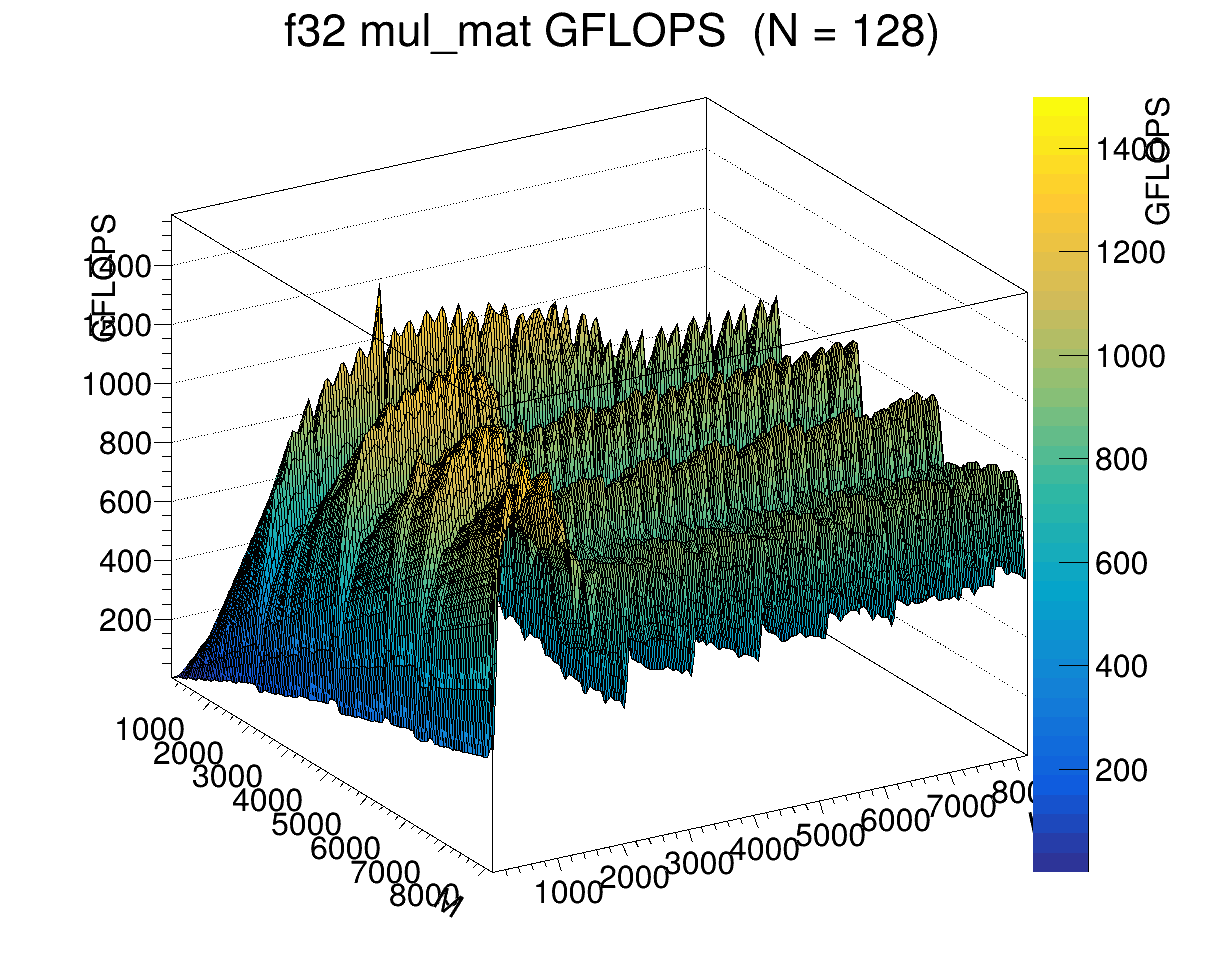

Although I had hoped to finish in 11 hours, the benchmark ended up taking about 15 hours, and I had to kill it early because I was leaving. The results are quite interesting. The following images show the GFLOPS achieved for N = 128 at different M and K sizes. Notice the wavy patterns and cliffs on the right-hand side, and that performance always drops at K = 8192. Clearly some cache effect is involved. What is interesting is that it shows up so cleanly only along the K dimension. Along the M dimension, FLOPS rise steadily and then suddenly drop off. I would guess different cache levels are involved, except there appear to be 4 distinct regions instead of 3 (and the matrix engine does not cache in L1D).

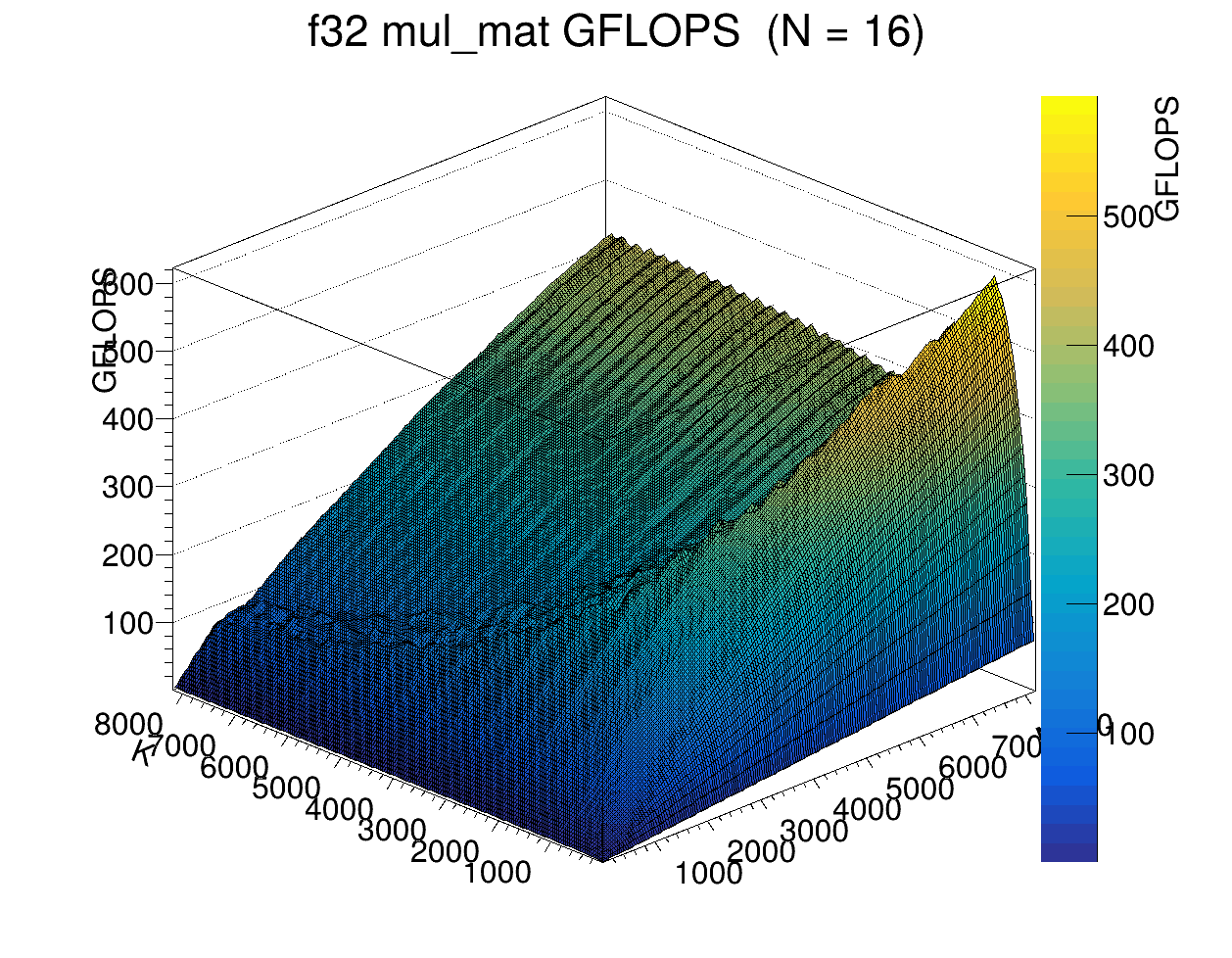

The performance characteristics at N = 16 look very different. There appear to be 2 curves here. The one in front seems to be limited by caching and compute latency. The one in back looks more like the classic memory bandwidth versus compute intensity tradeoff curve.

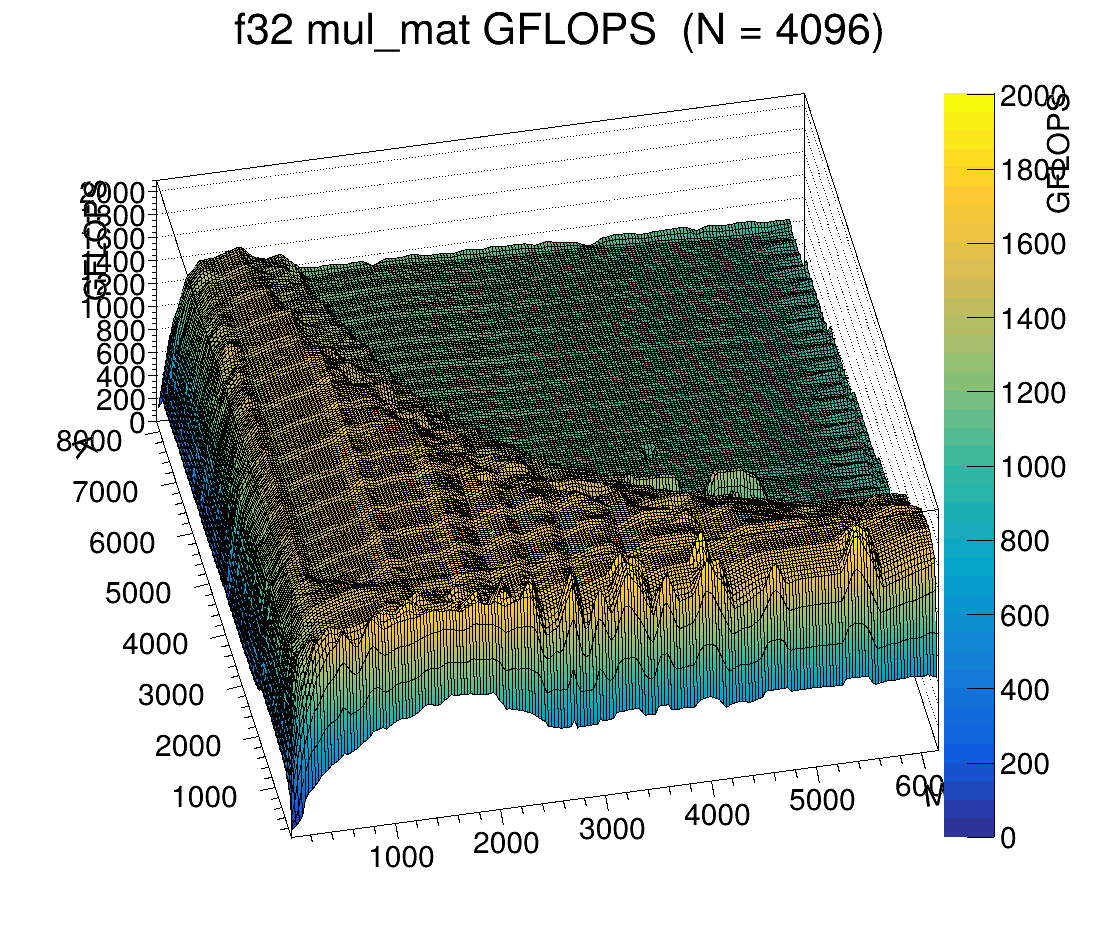

For large N (N = 4096), the on-die cache is saturated and we can see the boundary between cached speed and DRAM speed.

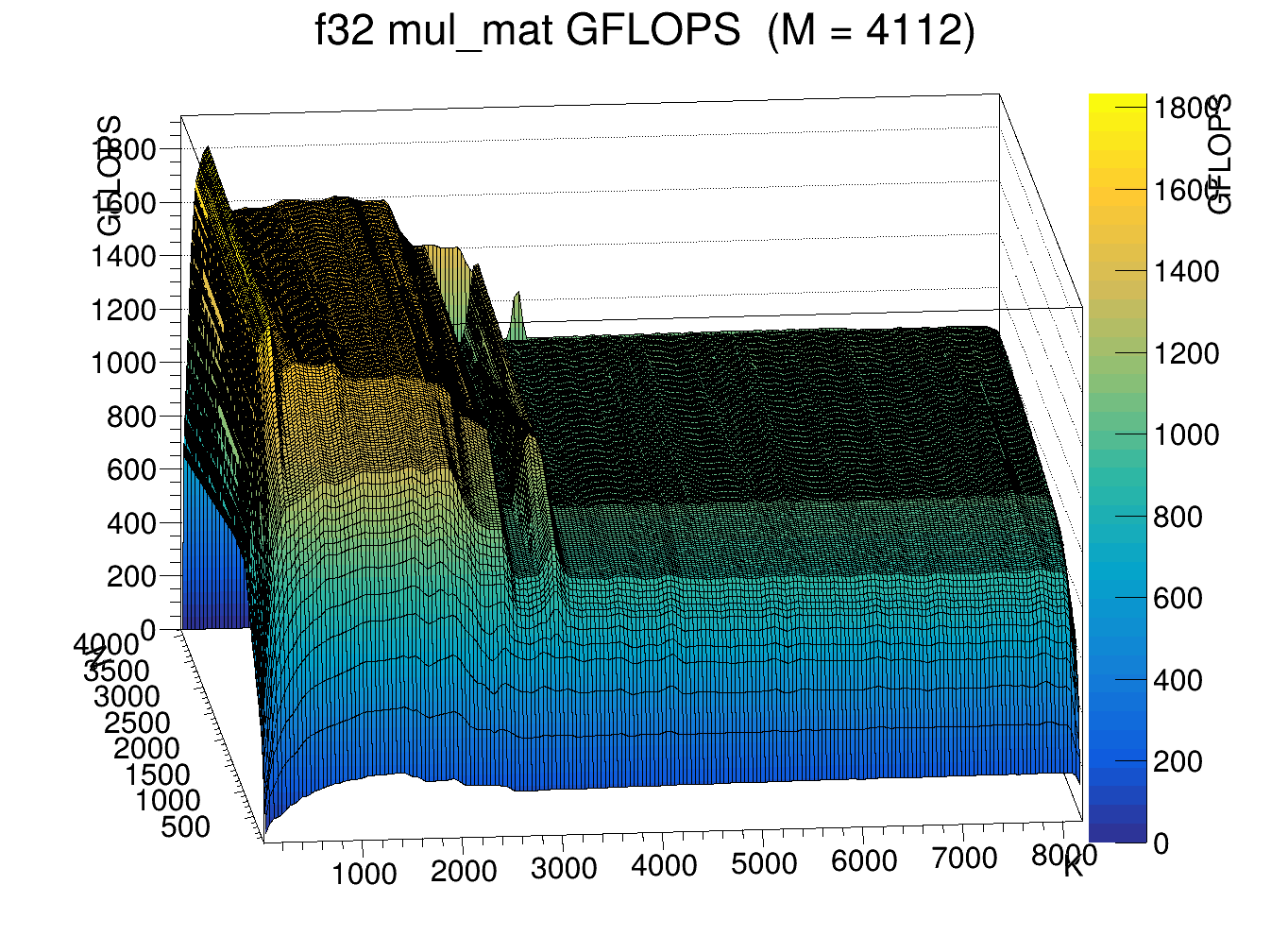

Looking at large M (M = 4112), there is a consistent performance drop around K = ~3000, which then recovers and drops again, along with some odd troughs. This is from early data before full random sampling.

Because of my matrix size calculation mistake, it is a bit awkward to isolate the effect of aligned versus unaligned N. But with some effort and a bit of awk, it can still be examined. For small matrix sizes, the extra partial tile is essentially a non-issue. A 144x144x16 matmul reaches 4.6 GFLOPS, while a 17x128x128 matmul reaches 4.4 GFLOPS, which is not much different (about 4% faster).

> awk -F, '$3==16 && $2 == 128+16 && $1 == 128+16{print $4}' < mul_mat_f32_perf.csv

4.6188777553505354

> awk -F, '$3 == 17 && $2 == 128 && $1 == 128{print $4}' < mul_mat_f32_perf.csv

4.4320175992276356

But at larger sizes, the effect is more noticeable. A 528x528x128 matmul runs at 274 GFLOPS, while a 512x512x129 matmul runs at 221 GFLOPS, a 23% difference.

> awk -F, '$3 == 128 && $2 == 528 && $1 == 528{print $4}' < mul_mat_f32_perf.csv

273.8809146469199

> awk -F, '$3 == 129 && $2 == 512 && $1 == 512{print $4}' < mul_mat_f32_perf.csv

221.47162633674154

Attempt 2

Attempt 2 is a re-run with the matrix size calculation fixed, along with a fixed kernel that rejects anything beyond the 2048th thread to keep code changes minimal. I removed the random sampling phase, increased the resolution of the N-dimension sweep, and reduced the 1.5s gate to 1.2s. This is not a huge change, but based on the previous results, memory-bound operations seem stable enough that noise is not severe.

A quick look at the GFLOPS plots shows a much stronger wavy pattern than in attempt 1 (see the N = 128 graph above). Given the pattern of peaks and troughs at certain multiples of 2^N, my hunch is that L2 aliasing is causing the performance dips.

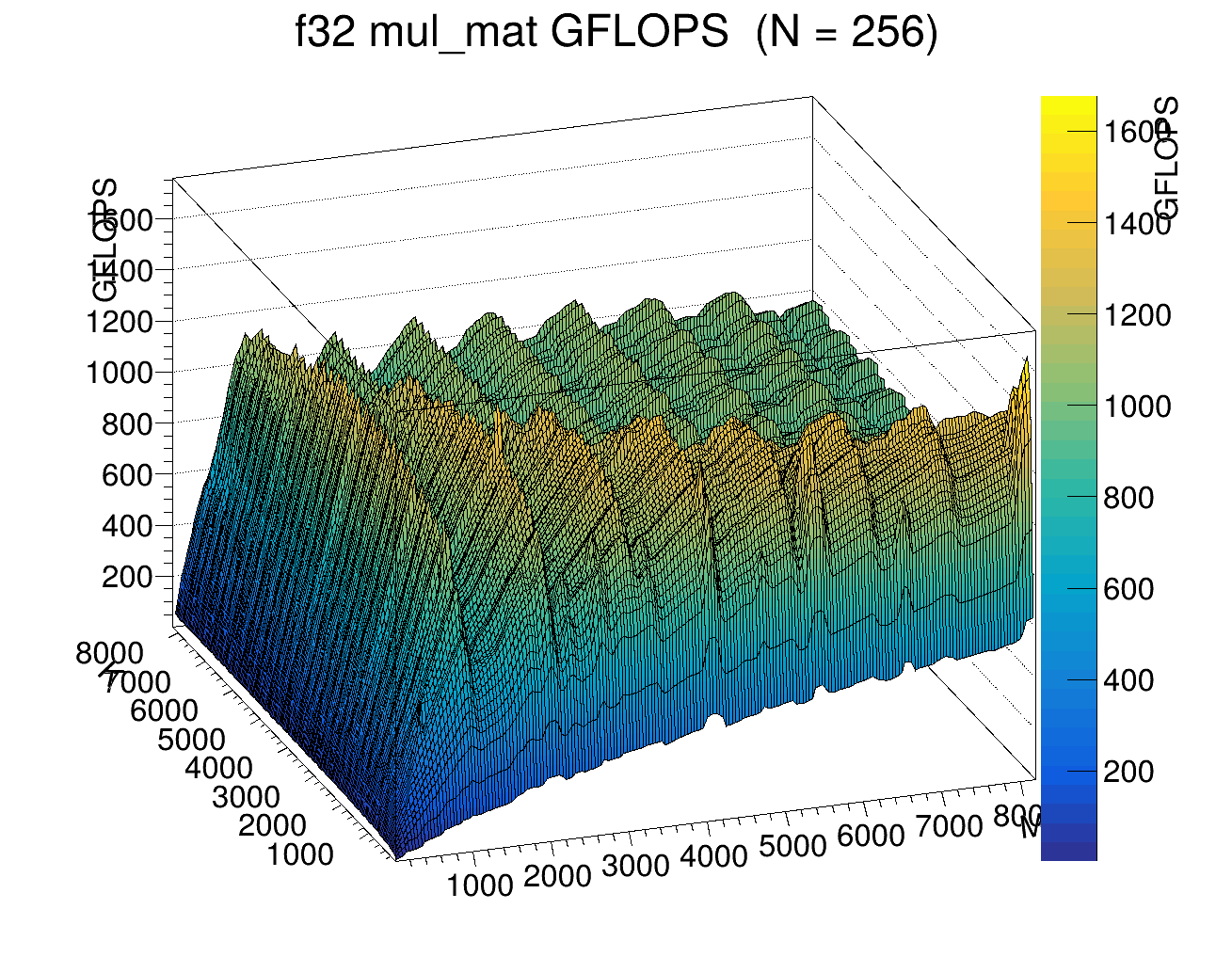

The same pattern shows up in the N = 256 graph as well.

I also made a quick WebM to show the performance landscape of the naive matrix multiplication kernel across the N dimension.

Data and visualization code are available at the following link:

The modified version of test-backend-ops that rusn the actual benchmark:

Good. Now I know what I am working with. I can start doing the fun work of making things fast.